Group-specific estimates

Difficulty

Discrimination

Latent-variable mean and variance

Constraints

Fixed value

Equality

IRT model-based test of differential item functioning

Many researchers study cognitive abilities, personality traits, attitudes, quality of life, patient satisfaction, and other attributes that cannot be measured directly. To quantify these types of latent traits, researchers develop instruments–questionnaires or tests consisting of binary, ordinal, or categorical items–to determine individuals' levels of the trait.

Item response theory (IRT) models can be used to evaluate the relationships between a latent trait and items intended to measure the trait. With IRT models, we can determine which test items are more difficult and which ones are easier. We can determine which test items provide much information about the latent trait and which ones provide only a little.

Stata's IRT suite fits IRT models and can make comparisons across groups. This means we can evaluate whether an instrument measures a latent trait in the same way for different subpopulations. For instance, we might ask the following questions:

Do students from urban and rural schools perform differently on a test intended to measure mathematical ability?

Does an instrument measuring depression perform the same today as it did five years ago?

Does one of the questions on a survey about patient satisfaction measure the trait differently for younger and older patients?

In other words, multiple-group IRT models allow us to evaluate differential item functioning (DIF).

To fit multiple-group IRT models in Stata, we simply add the group() option to the irt command.

We previously fit a two-parameter logistic (2PL) model for item1 through item10 by typing

. irt 2pl item1-item10

We now type

. irt 2pl item1-item10, group(urban)

to fit a multiple-group version of this model allowing differences for students in urban and rural schools.

We can add the group() option to any of the following irt commands and fit multiple-group models for binary, ordinal, and categorical responses.

| irt 1pl | One-parameter logistic model |

| irt 2pl | Two-parameter logistic model |

| irt 3pl | Three-parameter logistic model |

| irt grm | Graded response model |

| irt nrm | Nominal response model |

| irt pcm | Partial credit model |

| irt rsm | Rating scale model |

| irt hybrid | Hybrid IRT model |

We can also impose constraints on these models to analyze differential item functioning (DIF).

After fitting a multiple-group model, we can easily graph the item characteristic curves, item information functions, and more.

We have data on student responses to nine math test items, item1–item9. The responses are coded 1 for correct and 0 for incorrect. There are 761 students from rural areas and 739 students from urban areas.

We can fit a two-parameter logistic model and allow for differences across urban and rural subpopulations by typing

. irt 2pl item1-item9, group(urban)

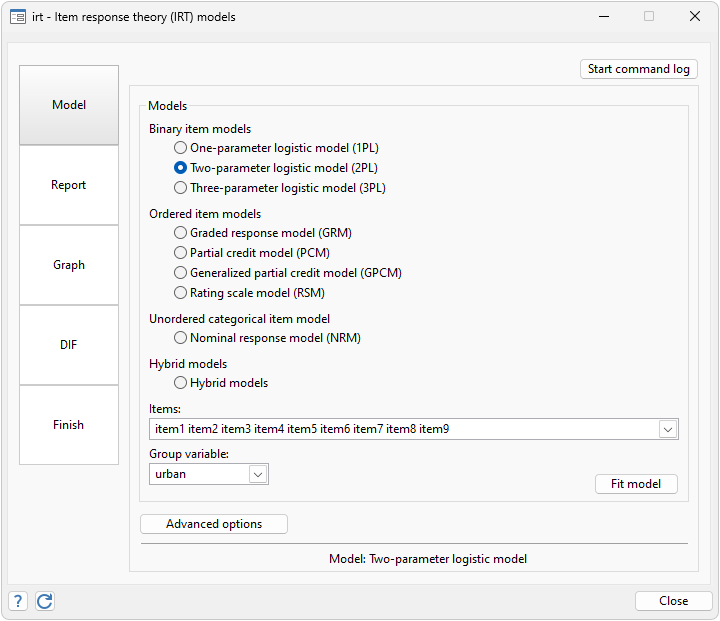

Or we can use the IRT Control Panel

This produces estimates of the discrimination and difficulty parameters for each item as well as estimates of the mean and variance of the latent trait. Standard errors, tests, and confidence intervals are also reported. But we will not show all of that here. Instead, we use the estat greport command to display a compact table with side-by-side comparisons of the parameter estimates for each group.

. estat greport

| Parameter | rural urban | |

| item1 | ||

| Discrim | .77831748 .77831748 | |

| Diff | .36090796 .36090796 | |

| item2 | ||

| Discrim | .75331293 .75331293 | |

| Diff | -.08075248 -.08075248 | |

| item3 | ||

| Discrim | .90118513 .90118513 | |

| Diff | -1.7362731 -1.7362731 | |

| item4 | ||

| Discrim | 1.2811154 1.2811154 | |

| Diff | -.57762574 -.57762574 | |

| item5 | ||

| Discrim | 1.1852458 1.1852458 | |

| Diff | 1.3545593 1.3545593 | |

| item6 | ||

| Discrim | .99178782 .99178782 | |

| Diff | .68720188 .68720188 | |

| item7 | ||

| Discrim | .58759278 .58759278 | |

| Diff | 1.617199 1.617199 | |

| item8 | ||

| Discrim | 1.1989038 1.1989038 | |

| Diff | -1.7652768 -1.7652768 | |

| item9 | ||

| Discrim | .65797409 .65797409 | |

| Diff | -1.5426014 -1.5426014 | |

| mean(Theta) | 0 -.2156593 | |

| var(Theta) | 1 .79374349 | |

By default, all the difficulty and discrimination parameters are constrained to be equal across groups. We obtain distinct estimates only of the mean and variance of the latent trait (Theta); both the mean and variance are smaller for the urban students.

Let's store the results of this model.

. estimates store constrained

Now suppose we are concerned that item1 is unfair–that it tests mathematical ability differently for urban and rural students. Perhaps it uses language that is likely to be unfamiliar to one of these groups. We can fit a model that allows the difficulty and discrimination parameters to differ across groups by typing

. irt (0: 2pl item1) (1: 2pl item1) (2pl item2-item9), group(urban)

This is an example of irt's hybrid syntax, which allows us to specify different types of IRT models for different items and even for different groups. In our case,

(0: 2pl item1) says that we estimate one set of parameters for item1 in group 0, the rural group.

(1: 2pl item1) says that we estimate a different set of parameters for item1 in group 1, the urban group.

The result, displayed with estat greport, is

. estat greport

| Parameter | rural urban | |

| item1 | ||

| Discrim | .56531696 1.1252177 | |

| Diff | .2735678 .37061701 | |

| item2 | ||

| Discrim | .77447173 .77447173 | |

| Diff | -.0628632 -.0628632 | |

| item9 | ||

| Discrim | .6725579 .6725579 | |

| Diff | -1.4920764 -1.4920764 | |

| mean(Theta) | 0 -.17731632 | |

| var(Theta) | 1 .71409887 | |

We now see the distinct discrimination and difficulty estimates for item1. In particular, item1 appears to have a substantially greater ability to discriminate between high and low values of mathematical abilities for students in urban areas.

We can see this graphically if we type

. irtgraph icc item1

The steeper slope in the item characteristic curve for the urban group is reflective of item1's greater discriminating ability in this group.

So far, we have not formally tested whether the discrimination or difficulty for item1 differ across groups. We can test this hypothesis using a likelihood-ratio test.

We first store the results of this model:

. estimates store dif1

Then we use the lrtest command to test for differences.

. lrtest constrained dif1 Likelihood-ratio test Assumption: constrained nested within dif1 LR chi2(2) = 12.00 Prob > chi2 = 0.0025

We reject the null hypothesis that both discrimination and difficulty are equal across the two groups. At least one is different, but this test does not tell whether discrimination, difficulty, or both differ.

The cns() option is another feature in Stata's IRT suite. With cns(), we can specify constraints for both standard and multiple-group IRT models. In our example, the cns() option allows us to test specifically for a difference in the difficulty of item1 across the two groups.

We add the cns(a@c1) option to each of our specifications for item1 to constrain the discrimination. Here a refers to discrimination, and c1 is the name of the constraint. We could replace c1 with mycons or any other name.

We continue to allow the difficulty to vary by group.

. irt (0: 2pl item1, cns(a@c1)) (1: 2pl item1, cns(a@c1)) (2pl item2-item9), group(urban) <omit results>

We store the results of this model by typing

. estimates store dif2

and we perform a likelihood-ratio test comparing this with the model with both difficulty and discrimination constrained.

. lrtest constrained dif2 Likelihood-ratio test Assumption: constrained nested within dif2 LR chi2(2) = 12.00 Prob > chi2 = 0.0025

Using a 5% significance level, we reject the hypothesis that the difficulties are equal.

Learn more about Stata's IRT features.

Read more about group IRT modeling in the Item Response Theory Reference Manual; see [IRT] irt, group().

Read more about IRT constraints in the Item Response Theory Reference Manual; see [IRT] irt constraints.